- ◆AI does not lie to you on purpose. It just cannot reliably tell what is true from what only sounds true.

- ◆This is called hallucination, and it happens with every AI tool, every single day.

- ◆In property work the consequences of unchecked AI output are not just embarrassing.

- ◆This guide explains exactly why it happens and the four checks that catch it before it matters.

Why AI Gets Things Wrong and How to Catch It Before It Costs You

In the first guide in this series we covered what AI actually is. Software that predicts text based on patterns. Not a brain. Not a search engine. A fast, capable first-draft machine that works best with a human checking what comes back.

That last part is what this post is about.

AI gets things wrong. Confidently, calmly, and without any sign that it is doing so.

For most people that is occasionally annoying. For property professionals dealing with legislation, compliance, financial figures, and regulated advice, it is something worth understanding properly before you rely on it for anything that matters.

Hallucination Sounds Like Sci-Fi. It Is Not.

Hallucination is your AI casually saying something wrong with a straight face.

A made-up statistic. A fabricated quote. An outdated regulatory reference presented as current. All delivered with the same confident tone it uses when it is right.

It happens because of something fundamental about how these tools work.

On its own, AI does not look things up. It predicts what a useful response looks like based on patterns in its training data. Word by word. Each one chosen because it is statistically likely to come next, given everything before it.

That process produces plausible text. Not accurate text.

Like that mate at the pub who sounds completely certain but has half the details wrong. Except this mate never admits it.

The difference that matters

Plausible means it sounds like it could be right. Accurate means it is right. AI produces plausible. You need accurate. Closing that gap is what the rest of this post is about. For plain-English definitions of any AI term that comes up, the No Jargon AI Jargon Buster has you covered.

Stanford HAI research found that leading AI models can produce confidently wrong answers in 69% to 88% of factual legal queries.

Why Property Gets Burned Worse Than Most

Every industry has some exposure to AI errors. Property has more than most.

Three reasons.

First, the regulatory environment changes constantly. Legislation that was accurate six months ago may not be now. AI does not update itself. It knows what it was trained on and nothing that happened after.

Second, the consequences in property are professional rather than just reputational. A wrong figure in a suitability letter, an outdated compliance reference in a client document, or incorrect tenancy law advice is not just embarrassing. It has professional, regulatory, and in some cases legal implications.

Third, the volume of communication is high. The more you use AI, the more opportunities there are for an unchecked error to slip through. One wrong email to a client is one thing. The same wrong information repeated across forty portal lead responses is another.

Take Section 21. A letting agent gets a query from a landlord asking how to proceed. AI confidently explains the full process, step by step. The problem: Section 21 rules have changed significantly and continue to change. AI may be working from an earlier version of the law. The landlord follows the advice. The advice no longer applies. The risk is not hypothetical. That is what happens when you trust training data on a question where the answer has moved.

AI’s Four Favourite Property Mistakes

Legislation and Regulation

The highest risk category. Full stop.

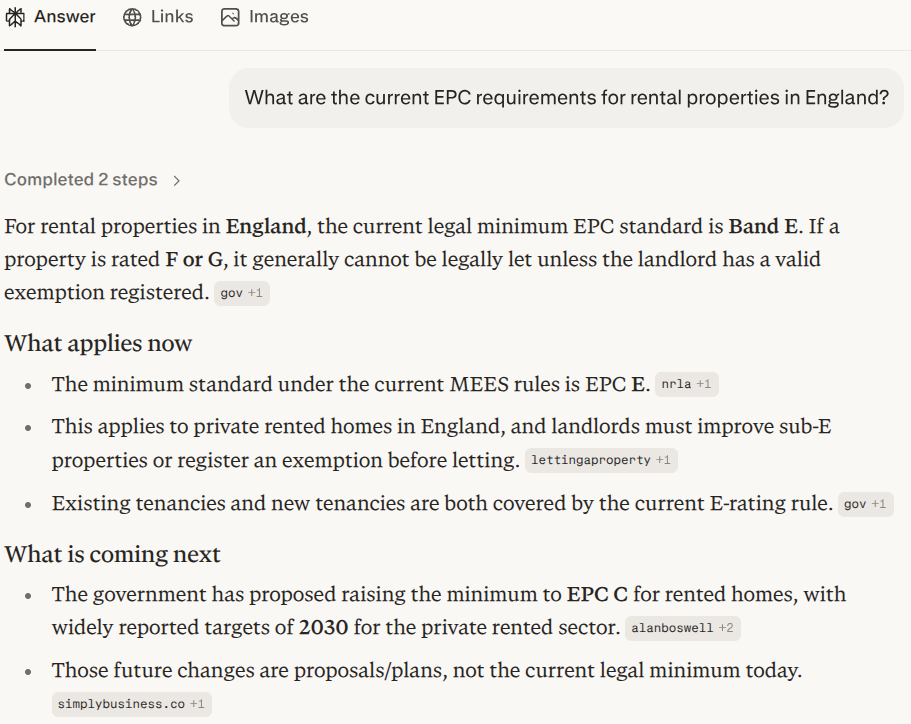

AI training data has a cut-off date. Property legislation changes regularly. Section 21, EPC requirements, stamp duty thresholds, AML regulations, FCA rules. All subject to change. All things AI will answer confidently whether or not its information is current.

Section 21 rules have changed. AI may not know that yet. Gov.uk will. Always check gov.uk.

Statistics and Figures

Ask AI for a statistic and it will give you one.

Whether that statistic exists is a separate question entirely.

AI will generate numbers that look plausible for the context. House price growth percentages, mortgage approval rates, market share figures. They will sound specific and credible. Some will be real. Some will be entirely made up. It will not flag which is which.

“UK house prices grew 7.3% last quarter.” That sounds real. It might be. It might also be something the model generated because 7.3% sounded like the right kind of number for that sentence. Always find the original source.

Quotes and Attribution

AI is particularly unreliable with quotes. Ask it for something a named person said and it will produce something. Whether they actually said it is not guaranteed.

This matters if you are writing content, preparing client presentations, or drafting anything with attributed quotes. Check them.

Dates and Timelines

AI gets dates wrong more often than you would expect. This includes when legislation came into force, key deadlines, and the timing of specific events. Anything date-sensitive needs checking independently.

You Are Thinking: Can’t They Just Fix This?

Not completely. Here’s why.

Models are improving and accuracy is getting better over time. But hallucination is not a glitch they forgot to patch. It is a property of how language models work.

AI generates language by predicting what comes next. That process will always produce some level of confident wrongness because the model has no way to verify what it is saying against reality in real time. It only has its training data, and training data contains errors, gaps, and information that has since changed.

It is getting better. Slowly. About as slowly as your slowest vendor solicitor.

The checking habit is permanent. Not a workaround until they fix it.

Your Four-Check Safety Net

No 47-step compliance checklist. Just four habits that stop you getting burned.

1. Read It Before You Send It

Sounds obvious. Still the most skipped step.

Every piece of AI output that leaves your desk should be read by a human first. Not skimmed. Read. Many of the errors that cause real problems are ones a quick but careful read would have caught.

If you are too busy to read it before sending it, you are too busy to use AI safely.

2. Check Any Specific Claim You Did Not Already Know

One question. Did you already know that to be true?

If yes, probably fine. If no, spend thirty seconds checking it before it goes anywhere. That single question catches most of the errors that actually matter.

3. Never Use AI for Compliance Without Verification

Non-negotiable.

AML, FCA regulations, tenancy law, stamp duty, EPCs, right to rent. Any AI-generated content touching these areas needs checking against a current authoritative source before it goes near a client. Every time.

AI is useful for drafting the structure of a compliance document. It is not the compliance check itself.

4. Use Perplexity When You Need Current Information

Most AI tools draw on training data with a cut-off date. If you need something current, use Perplexity instead. It searches the web in real time and shows you the sources its answer came from, so you can verify it in one click. That makes it well suited to anything time-sensitive, like legislation changes or current market stats. Still click through and read the source itself: a search-based answer can misread what it found.

What Needs Checking and How Much

Not everything needs the same level of scrutiny. Here is a simple way to think about it.

| Task Type | Check Needed | Property Example |
|---|---|---|
| Low risk | Read before sending | Portal lead replies, property descriptions, social captions |
| Medium risk | Verify specific dates and figures | Sales progression updates, client timeline emails |
| High risk | Check against authoritative source | EPC guidance, AML references, FCA compliance, tenancy law |

This is not rocket science. It just means not skipping steps you would never skip with anything else important.

The Short Version

AI is useful. It is also unreliable in specific, predictable ways.

The people who get caught out are not the people who used it for the wrong things. They are the people who forgot to check.

Build the habit. Keep the time savings. Avoid the consequences.

Try This Before the End of the Week

Two minutes. Open ChatGPT or Claude and ask something you need to know for a current case. A legislative question, a compliance point, a figure that needs to be accurate.

Then check it against gov.uk or the relevant authoritative source.

Not because you expect it to be wrong. Because you want the habit in place before it matters rather than after.

- Read every AI draft before it leaves your desk

- Check any specific claim you did not already know to be true

- Verify compliance references against gov.uk or your trade body

- Use Perplexity for anything where being current matters

Questions People Actually Ask

What is AI hallucination in simple terms?

When AI produces something confidently wrong. A made-up stat, a fabricated quote, an outdated regulatory reference. It happens because AI generates plausible text based on patterns, not accurate text based on verified facts. It has no way of knowing the difference.

How often does AI get things wrong?

Often enough that you should never trust AI output on anything important without checking it. The rate varies by task and tool but no current AI model is reliable enough to use unsupervised for anything with professional or regulatory consequences.

Is AI getting better at accuracy?

Yes, gradually. But hallucination is a fundamental property of how these systems work, not a bug they are about to fix. The checking habit is permanent.

What AI mistakes matter most for estate agents and letting agents?

Legislation and regulation are the highest risk. Tenancy law, EPC requirements, right to rent, and Section 21 changes are exactly the kind of thing AI may have outdated or wrong. Always verify regulatory content against current authoritative sources.

What AI mistakes matter most for mortgage brokers?

Anything touching FCA regulations, suitability requirements, product details, or financial figures. AI-generated content in these areas needs checking against current authoritative sources before it goes near a client or a compliance file.

Can I use AI for compliance documents in property?

You can use AI to draft the structure and language. You cannot use AI as the compliance check itself. Every specific regulatory reference needs verifying against a current authoritative source before the document is used.

How to Write a Good AI Prompt (The Difference Is Bigger Than You Think)

You now know what AI is and why it gets things wrong. The next guide covers the part that changes your results most: how you ask. A clear brief versus a vague one produces completely different output from the same tool. Here is exactly what that looks like in practice.

Read Part 3 →